Designed experiments play a crucial role in quality improvement. This post introduces the essential concepts involved and contrasts the statistically designed experiment with other methods of experimentation, such as trial and error and one-factor-at-a-time testing.

- What is Design of Experiment (DOE)?

- Key concepts & terminology

- Barriers and pitfalls

- Steps to conduct a DOE

- Main effects and interactions

- Key Benefits

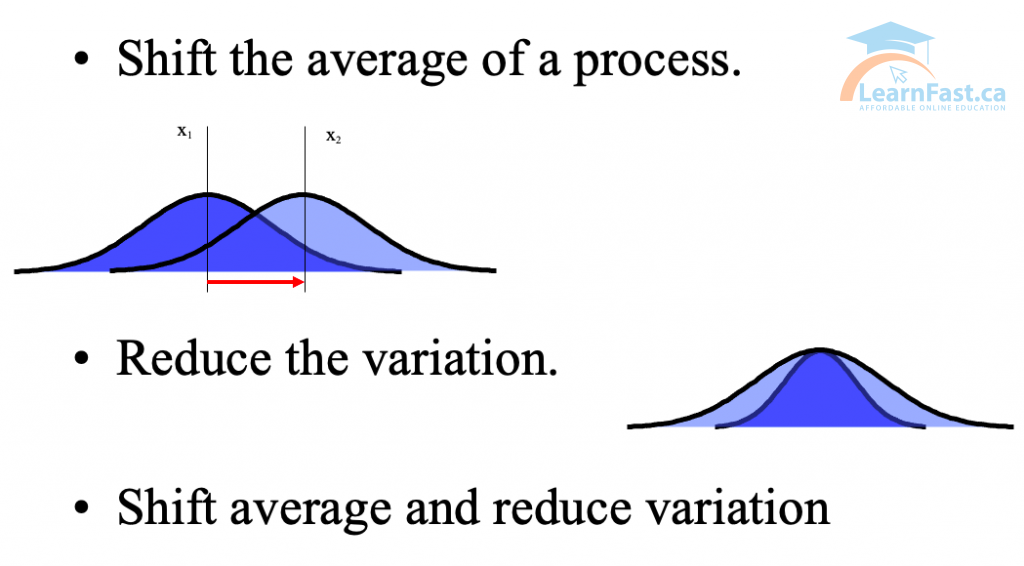

Why use DOE?

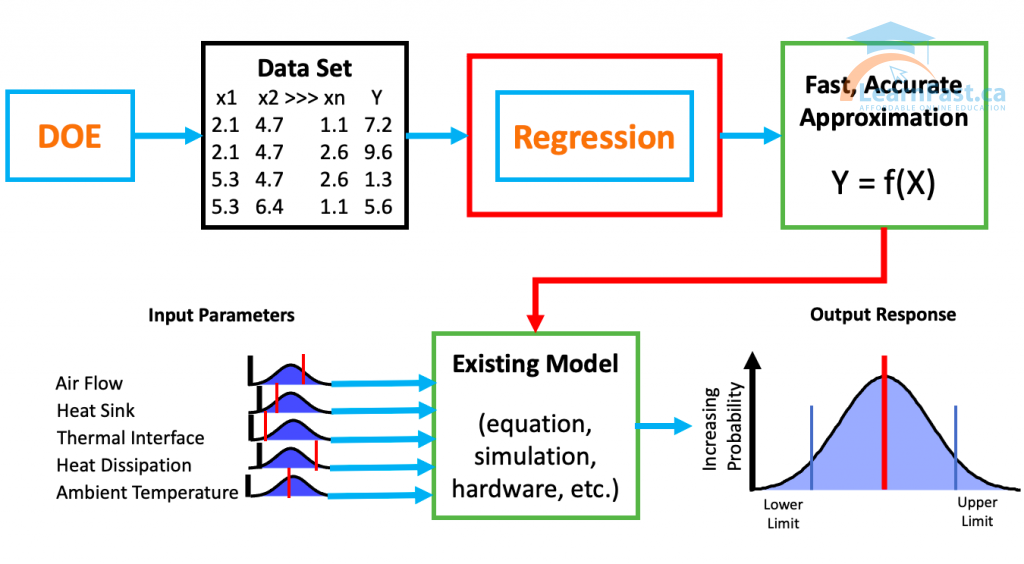

Establishing the y = f(x) Relationship

- Passive methods to learn this relationship can be used:

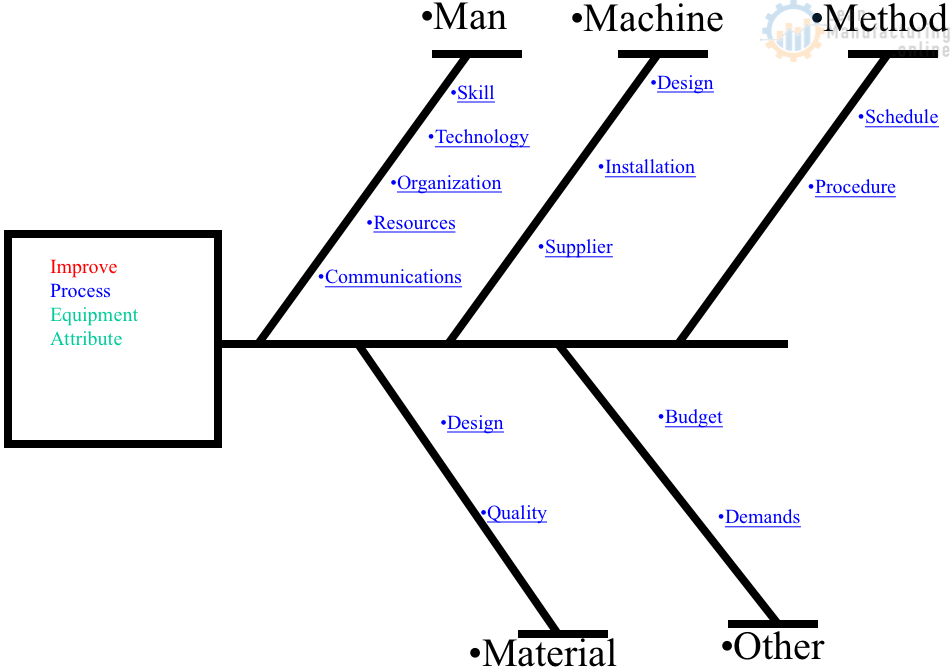

- Practical methods – Cause and Effect, brainstorming

- Graphical methods – histograms, box plots, scatter plots

- Analytical methods – regression, hypothesis testing

- Passive methods have several disadvantages:

- Correlation does not imply causation

- Trends can be incorrectly attributed to factors

- Interactions are not easy to study

- Quantification of impact and modeling is not always possible

The statistically designed experiment usually involves varying several variables at the same time and obtaining multiple measurements under equivalent experimental conditions.

Methods of Experimentation

- Trial & Error

  - Time consuming, expensive and potentially high risk

  - Possible to incorrectly attribute relationship to a factor

  - Difficult to quantify impact of factor and optimize process

- One Factor At A Time (OFAT)

  - Attempt to hold all other factors constant while changing one factor of interest

  - Impact of a factor not studied may be attributed to the factor being studied

  - Impossible to study interactions

  - Not useful to build predictive equations

Design of Experiment

A designed experiment is one in which one or more factors, called independent variables, that are believed to affect the experimental outcome are identified and manipulated according to a predetermined plan.

- A structured investigative technique

- Based on statistics – analyzing a “system”

- Set multiple input factors (X) for each experiment and analyze result (Y)

- More efficient, and yields more information, than varying one input factor at a time (OFAT)

- DOE combinations of input factors (X’s) and results (Y’s) can be turned into mathematical models using regression techniques.

- Optimization of system
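As a minimal sketch of how factor settings and results can become a mathematical model, the example below fits y = b0 + b1·A + b2·B + b12·A·B to a two-factor, two-level full factorial. The response values are invented for illustration, and the factors are assumed to be coded as -1 (low) and +1 (high). Because the coded columns of a balanced full factorial are orthogonal, each least-squares coefficient reduces to a simple average, so no regression library is needed here.

```python
from itertools import product

# Coded settings for two factors: -1 = low level, +1 = high level.
design = list(product([-1, 1], repeat=2))  # (A, B) for each of the 4 runs

# Hypothetical responses (Y), one per run, in the same order as `design`.
y = [20.0, 30.0, 40.0, 62.0]


def fit_two_factor_model(design, y):
    """Least-squares fit of y = b0 + b1*A + b2*B + b12*A*B.

    This shortcut is valid only for a balanced, coded (-1/+1) full
    factorial, where the model columns are orthogonal.
    """
    n = len(y)
    columns = {
        "b0": [1 for _ in design],
        "b1": [a for a, _ in design],
        "b2": [b for _, b in design],
        "b12": [a * b for a, b in design],
    }
    return {
        name: sum(c * yi for c, yi in zip(col, y)) / n
        for name, col in columns.items()
    }


coef = fit_two_factor_model(design, y)


def predict(a, b):
    """Predicted response at coded settings (a, b)."""
    return coef["b0"] + coef["b1"] * a + coef["b2"] * b + coef["b12"] * a * b


print(coef)
print(predict(1, 1))
```

For unbalanced data or more complex designs, a general regression routine would be the usual choice; the averaging shortcut above is only meant to show where the coefficients come from.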

Barriers and pitfalls

- Problem not clearly stated or understood

- Objectives not clear

- DOE can be costly and/or time consuming

- Factors not clearly understood (brainstorming, expert knowledge)

- Inadequate knowledge/training to conduct sound experiment

- Expectation for instant results

- Lack of management support and understanding

10 Steps of Implementing DOE

1. Determine the Problem

2. Create the Objective

3. Select the Input Factors (“X”s)

4. Select the Output (Response)

5. Choose the Factor Levels

6. Select the Experiment Design

7. Run Experiment – Collect the Data

8. Analyze the Data

9. Draw Conclusions

10. Accomplish the Objective

1. Definition of the Problem

- Macro Statement of Problem: High level statement defining the problem with quantification and effects

- Response Variable: (“Y”) Output and measurement source

- Quantification of the Problem:

  - Conditions: Attributes impacting the “Y” variable

  - Extent: Quantitative effects

  - Performance: Related to CTQs

  - Time Frame: Time of data set under study

  - Specifications: Customer CTQs or expectations

2. Objective

- What is the relationship between the input factors (“X”s) and the output (response “Y”) factors?

- Are you trying to establish the vital few “X” from the trivial many?

- Are you interested in knowing whether two input factors act together to influence the output (“Y”)?

- Are you trying to determine the optimal settings of the input factors?

3, 4. Selecting the Input Factors (“X”s) and the Output (Response)

Factors may be quantitative (variable/continuous data) or qualitative (attribute/discrete data). Sources for identifying them include:

- Process Mapping

- FMEA

- Brainstorming

- Literature Review

- Scientific Theory

- Operator Experience

- Engineering Knowledge

- CSM

- Customer/Supplier Output

5. Choose the Factor Levels

- Variables data

  - If the experiment is to be conducted at two different settings of a continuous variable, then the factor must have two levels, such as two temperature levels.

- Attribute data

  - If the experiment is to be conducted using attribute settings, then each setting is a level of the factor (e.g. clean versus not clean).
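Two-level factors are often analyzed in coded units, with the low level written as -1 and the high level as +1. This is a common convention rather than something the steps here require. The sketch below shows one way to do the conversion; the temperature range and the attribute mapping are hypothetical.

```python
def to_coded(value, low, high):
    """Map a natural-unit setting onto the coded scale (-1 = low, +1 = high)."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range


def to_natural(coded, low, high):
    """Map a coded setting back to natural units."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


# Hypothetical continuous factor: oven temperature in degrees C.
TEMP_LOW, TEMP_HIGH = 150.0, 190.0

# Hypothetical attribute factor: each setting is simply assigned a code.
CLEAN_LEVELS = {"not clean": -1, "clean": +1}

print(to_coded(150.0, TEMP_LOW, TEMP_HIGH))  # low level
print(to_coded(170.0, TEMP_LOW, TEMP_HIGH))  # midpoint of the range
print(to_natural(+1, TEMP_LOW, TEMP_HIGH))   # high level in natural units
```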

6. Select the Experiment Design

| Data Availability | Types of Experiments |
| --- | --- |
| a lot | Full factorial studies, response surface methodology |
| some | Factorial studies, new factors, new levels |
| a little | Fractional factorials (5-20 factors), screening studies |

7. Run Experiment – Collect the Data

Set the high and low levels of each factor on your process, run the experiments (trials), and capture the result (Y) from each trial. This is your experimental data.

8, 9, 10. Analyze the Data, Draw Conclusions and Optimize
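A common first analysis step for a two-level design is to compute the main effect of each factor and the interaction effect between factors. The sketch below does this for a two-factor full factorial, assuming levels coded as -1 and +1. The response values are hypothetical, and the `effect` helper is an illustrative name, not part of any DOE package.

```python
from statistics import mean

# Each run: (A, B, response). Levels are coded -1 (low) / +1 (high).
# The response values are hypothetical.
runs = [
    (-1, -1, 20.0),
    (+1, -1, 40.0),
    (-1, +1, 30.0),
    (+1, +1, 62.0),
]


def effect(runs, contrast):
    """Mean response where the contrast is +1 minus mean where it is -1."""
    high = [y for a, b, y in runs if contrast(a, b) > 0]
    low = [y for a, b, y in runs if contrast(a, b) < 0]
    return mean(high) - mean(low)


main_a = effect(runs, lambda a, b: a)              # main effect of A
main_b = effect(runs, lambda a, b: b)              # main effect of B
interaction_ab = effect(runs, lambda a, b: a * b)  # A x B interaction

print(main_a, main_b, interaction_ab)
```

A non-zero interaction effect means the effect of A depends on the level of B, which is exactly the information one-factor-at-a-time testing cannot provide. Whether an effect is large enough to matter still has to be judged against the noise in the process.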

Definitions

- Factors: uncontrolled and controlled variables (Xs) whose influence is being studied

- Levels: The values at which each factor will be set for the experiment. It is recommended to set the levels of the Xs as far apart as possible

- Runs: Each experimental setting at which we will collect data. The run order is the sequence in which the DOE is conducted, and it should always be randomized

- Full Factorial DOE: Tests all combinations of all factors; information on all factors and all interactions is obtained

- Fractional Factorial DOE: Tests a portion or “fraction” of the complete set of factor combinations; allows more factors to be studied with fewer runs.
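The difference between the two designs can be sketched in a few lines. The example below builds a three-factor, two-level full factorial (8 runs) and a half fraction (4 runs) in which the level of C is set by the generator C = A·B. The factor names and the choice of generator are assumptions for illustration.

```python
from itertools import product


def full_factorial(factors):
    """All combinations of levels. `factors` maps factor name -> list of levels."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


def half_fraction_3_factors():
    """2^(3-1) design: full factorial in A and B, with C set by C = A*B."""
    return [
        {"A": a, "B": b, "C": a * b}
        for a, b in product([-1, 1], repeat=2)
    ]


full = full_factorial({"A": [-1, 1], "B": [-1, 1], "C": [-1, 1]})
half = half_fraction_3_factors()

print(len(full), "runs in the full factorial")
print(len(half), "runs in the half fraction")
for run in half:
    print(run)
```

The saving in runs has a cost: in this half fraction the main effect of C cannot be separated from the A×B interaction, because both follow the same pattern of signs.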

Repetition and Replication

- Repetition: Running the same factor combination more than once within the same experimental set-up

  - Used when there is not enough data and there is a time constraint

- Replication: Rerunning the same experiment at a different time

  - Repeating the complete experiment

  - Primary means for analyzing the stability of factor effects, and estimating “noise” in the process

  - Increased confidence in the results

  - Can be costly; use wisely

- Most important consideration in conducting DOE
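One way replication can be used to estimate process “noise” is a pooled standard deviation across the replicated factor combinations. The sketch below assumes a two-factor design in which each combination was replicated twice; the data and the `pooled_std` helper are hypothetical.

```python
from math import sqrt
from statistics import variance

# Hypothetical replicated design: each coded (A, B) combination was run twice,
# as true replicates (complete reruns, not back-to-back repeats).
replicates = {
    (-1, -1): [19.0, 21.0],
    (+1, -1): [39.0, 41.0],
    (-1, +1): [28.0, 32.0],
    (+1, +1): [60.0, 64.0],
}


def pooled_std(groups):
    """Pooled standard deviation across groups of replicate measurements."""
    groups = list(groups)
    sum_sq = sum((len(g) - 1) * variance(g) for g in groups)
    dof = sum(len(g) - 1 for g in groups)
    return sqrt(sum_sq / dof)


noise = pooled_std(replicates.values())
print(round(noise, 3))
```

Factor effects that are small compared with this noise estimate are hard to distinguish from random variation.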

Randomization

- Technique that addresses the problem of experimental ‘noise’, such as:

  - Temperature or humidity

  - Batch-to-batch variation

  - Position of parts in an oven, etc.

  - Technician or operator collecting data

- Most DOE software randomizes the run order automatically
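If no DOE software is at hand, randomizing the run order takes only a few lines. The sketch below shuffles the standard order of a three-factor, two-level design; the function name and the seed value are illustrative, and the seed is used only so that the same order can be reproduced later.

```python
import random
from itertools import product


def randomized_run_order(design, seed=None):
    """Return a shuffled copy of the design; the input list is left untouched."""
    rng = random.Random(seed)
    order = list(design)
    rng.shuffle(order)
    return order


# Standard (systematic) order of a 2^3 design in coded units.
standard_order = list(product([-1, 1], repeat=3))

run_order = randomized_run_order(standard_order, seed=42)
for run_number, settings in enumerate(run_order, start=1):
    print(run_number, settings)
```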

Blocking

- Used to study the effects of ‘noise’ factors

- Removes effects from a known or potential ‘noise’ factor

- Enables industrial experiments to proceed in a practical manner

- Restrictive Blocking

  - Runs are made in a restricted order, or with restricted conditions

  - To isolate the effects of nuisance factors
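As a hedged illustration of blocking, suppose a three-factor, two-level design has to be split across two batches of raw material (a hypothetical nuisance factor). One standard approach, sketched below, assigns runs to blocks by the sign of A·B·C and then randomizes the order within each block. The function name is an assumption for this example.

```python
import random
from itertools import product


def blocked_design(seed=None):
    """Split a 2^3 full factorial into two blocks of four runs.

    Runs are assigned by the sign of A*B*C, so the block difference is
    mixed up only with the three-factor interaction. Run order is then
    randomized inside each block.
    """
    rng = random.Random(seed)
    blocks = {1: [], 2: []}
    for a, b, c in product([-1, 1], repeat=3):
        blocks[1 if a * b * c < 0 else 2].append((a, b, c))
    for runs in blocks.values():
        rng.shuffle(runs)
    return blocks


blocks = blocked_design(seed=1)
for block_id, runs in blocks.items():
    print("Block", block_id, runs)
```

Each block contains every factor at its low level twice and at its high level twice, so a shift between batches does not bias the main effects. The price is that the A×B×C interaction can no longer be separated from the batch difference.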

DOE Sample Size

- Experimental Design

  - Number of corner points

  - Number of centre points

- ‘Effect’ relative to Std. Deviation

  - Large effect: usually a small number of runs

  - Small effect: a large number of runs

- Power of the experiment

  - Low risk of Type II error: a larger number of runs
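The relationship between effect size, standard deviation, power and the number of runs can be made concrete with a rough calculation. The sketch below assumes a balanced two-level design in which each effect compares the half of the runs at the high level with the half at the low level, and it uses a normal approximation. It ignores the t-distribution and the fact that run counts must fit the chosen design, so treat the result as a starting estimate only. The function name and default values are assumptions.

```python
from math import ceil
from statistics import NormalDist


def runs_needed(effect, sigma, alpha=0.05, power=0.80):
    """Approximate total runs to detect `effect` in a balanced two-level design.

    The effect estimate (mean at high minus mean at low) has variance
    4 * sigma**2 / N, which gives
        N >= 4 * ((z_alpha/2 + z_beta) * sigma / effect) ** 2
    where power = 1 - (Type II error risk).
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return ceil(4 * ((z_alpha + z_beta) * sigma / effect) ** 2)


print(runs_needed(effect=2.0, sigma=1.0))              # large effect vs. noise
print(runs_needed(effect=1.0, sigma=1.0))              # smaller effect
print(runs_needed(effect=1.0, sigma=1.0, power=0.95))  # lower Type II risk
```

Halving the effect to be detected roughly quadruples the number of runs, and asking for higher power increases it further.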